HPE Ezmeral ML Ops

DevOps speed and agility for machine learning

A solution that brings DevOps-like agility to the entire machine learning lifecycle.

Operational Machine Learning at Enterprise Scale

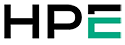

Bring DevOps-like speed and agility to ML workflows with support for every stage of the machine learning lifecycle: from sandbox experimentation with your choice of ML/DL frameworks, to model training on containerized distributed clusters, to deploying and tracking models in production.

A Container-Based Solution for the ML Lifecycle

Standardize processes across the ML lifecycle to build, train, deploy, and monitor machine learning models.

Model Building

Containerized sandbox environments with a variety of machine learning and deep learning applications and tools

Sandbox environments with any preferred data science tools—such as TensorFlow, Apache Spark, Keras, PyTorch and more—to enable simultaneous experimentation with multiple ML or deep learning (DL) frameworks.

Model Training

Scalable training environments with secure access to big data

On-demand access to scalable environments—single node or distributed multinode clusters—for development and test or production workloads. Patented innovations provide highly performant training environments—with compute and storage separation—that can securely access shared enterprise data sources on-premises or in cloud-based storage.

Model Deployment

Flexible, scalable, endpoint deployment

HPE Ezmeral ML Ops deploys the model’s native runtime image, such as Python, R, H2O, and so forth, into a secure, highly available, load-balanced, and containerized HTTP endpoint. An integrated model registry enables version tracking and seamless updates to models in production. Autoscaling from HPE Ezmeral ML Ops dynamically scales nodes for scoring engines.
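Here is a minimal sketch of a proportional scaling rule for scoring-engine replicas, to make the autoscaling idea concrete. It is a generic illustration, not HPE Ezmeral ML Ops code. The function name `desired_replicas`, the utilization percentages, and the replica bounds are all hypothetical. The rule scales the replica count by the ratio of observed to target utilization, rounds up, and then clamps the result. This is the same form documented for the Kubernetes Horizontal Pod Autoscaler.

```python
import math


def desired_replicas(
    current_replicas: int,
    avg_utilization_pct: float,
    target_utilization_pct: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Return how many scoring-engine replicas to run next (hypothetical helper).

    Scales the current replica count by observed / target utilization,
    rounds up so rounding never under-provisions capacity, and clamps
    the result to [min_replicas, max_replicas].
    """
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    if target_utilization_pct <= 0:
        raise ValueError("target_utilization_pct must be positive")
    raw = math.ceil(current_replicas * avg_utilization_pct / target_utilization_pct)
    return max(min_replicas, min(max_replicas, raw))


# Three replicas at 80% average utilization, targeting 50%, scale out to five.
print(desired_replicas(3, 80.0, 50.0))
```

For example, 3 × 80 / 50 = 4.8, which rounds up to five replicas. A production autoscaler usually adds a tolerance band and a stabilization window so that small fluctuations do not cause constant rescaling. This sketch omits both.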

Model Monitoring

End-to-end visibility across the ML pipeline

Complete visibility into runtime resource usage such as GPU, CPU, and memory utilization. Ability to track, measure, and report model performance, along with third-party integrations to track accuracy and interpretability.
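One common way to track model performance in production is to compare predictions with ground-truth labels as those labels arrive, and to watch accuracy over a sliding window. The sketch below assumes labels eventually become available for scored requests. The class name `RollingAccuracyMonitor`, the window size, and the alert threshold are hypothetical. The sketch does not reflect how HPE Ezmeral ML Ops or its third-party integrations compute metrics.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Accuracy over the most recent `window` labeled predictions (hypothetical helper)."""

    def __init__(self, window: int = 100, alert_below: float = 0.9) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self._hits = deque(maxlen=window)  # oldest entry is dropped automatically when full
        self.alert_below = alert_below

    def record(self, prediction, actual) -> None:
        # Store 1 for a correct prediction and 0 for a miss.
        self._hits.append(1 if prediction == actual else 0)

    def accuracy(self) -> float:
        if not self._hits:
            return float("nan")
        return sum(self._hits) / len(self._hits)

    def should_alert(self) -> bool:
        # Only alert once the window is full, so a few early misses do not trigger it.
        return len(self._hits) == self._hits.maxlen and self.accuracy() < self.alert_below


monitor = RollingAccuracyMonitor(window=4, alert_below=0.75)
for pred, label in [(1, 1), (0, 0), (1, 0), (1, 1), (0, 1)]:
    monitor.record(pred, label)
print(monitor.accuracy(), monitor.should_alert())
```

A fixed-size window keeps the metric responsive to recent drift, whereas an all-time average would mask a sudden drop in accuracy.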

Collaboration

Enable DevOps-like processes with code, model, and project repositories

Project repository and GitHub integrations in HPE Ezmeral ML Ops provide source control, ease collaboration, and enable lineage tracking for improved auditability. The model registry stores multiple models, including multiple versions with metadata, for various runtime engines.
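To illustrate what a model registry keeps track of, here is a minimal in-memory sketch. It holds several models, and each model has numbered versions, a runtime engine, and free-form metadata. The class and field names, the artifact paths, and the `git_commit` metadata key are all hypothetical. Recording the source commit is one way such a registry could support the lineage tracking described above. The sketch is not a description of the HPE Ezmeral ML Ops registry.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelVersion:
    name: str
    version: int          # 1-based, assigned by the registry
    runtime: str          # runtime engine, e.g. "python", "r", "h2o"
    artifact_uri: str     # location of the serialized model (hypothetical)
    metadata: Dict[str, str] = field(default_factory=dict)


class ModelRegistry:
    """In-memory registry holding multiple models, each with ordered versions."""

    def __init__(self) -> None:
        self._models: Dict[str, List[ModelVersion]] = {}

    def register(self, name: str, runtime: str, artifact_uri: str, **metadata: str) -> ModelVersion:
        # New versions are appended, so earlier versions stay available for rollback and audit.
        versions = self._models.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, runtime, artifact_uri, dict(metadata))
        versions.append(entry)
        return entry

    def get(self, name: str, version: int) -> ModelVersion:
        versions = self._models[name]
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name!r} has no version {version}")
        return versions[version - 1]

    def latest(self, name: str) -> ModelVersion:
        return self._models[name][-1]

    def list_models(self) -> List[str]:
        return sorted(self._models)


registry = ModelRegistry()
registry.register("churn", "python", "models/churn/v1.pkl", git_commit="a1b2c3d")
registry.register("churn", "python", "models/churn/v2.pkl", git_commit="e4f5a6b")
registry.register("fraud", "h2o", "models/fraud/v1.zip")
print(registry.latest("churn").version, registry.list_models())
```

Because versions are only ever appended, a production update means registering a new version and pointing the endpoint at it. The previous version remains retrievable for rollback.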

Security and Control

Secure multitenancy with integration into enterprise authentication mechanisms

HPE Ezmeral ML Ops software provides multitenancy and data isolation to ensure logical separation between each project, group, or department within the organization. HPE Ezmeral ML Ops integrates with enterprise security and authentication mechanisms such as LDAP, Active Directory, and Kerberos.
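You can picture the project-level isolation described above as a membership check on every read and write. In the minimal sketch below, membership is hard-coded. In a real deployment, it would come from the enterprise directory, for example LDAP or Active Directory groups. The `ProjectStore` class and its methods are hypothetical and do not represent the HPE Ezmeral ML Ops access-control implementation.

```python
from typing import Dict, Set


class ProjectStore:
    """Key/value store partitioned by project; users may only touch their own projects."""

    def __init__(self) -> None:
        self._data: Dict[str, Dict[str, bytes]] = {}
        self._members: Dict[str, Set[str]] = {}

    def add_member(self, project: str, user: str) -> None:
        self._members.setdefault(project, set()).add(user)
        self._data.setdefault(project, {})

    def _require_member(self, project: str, user: str) -> None:
        # Deny by default: unknown projects and non-members are both rejected.
        if user not in self._members.get(project, set()):
            raise PermissionError(f"{user!r} is not a member of project {project!r}")

    def put(self, user: str, project: str, key: str, value: bytes) -> None:
        self._require_member(project, user)
        self._data[project][key] = value

    def get(self, user: str, project: str, key: str) -> bytes:
        self._require_member(project, user)
        return self._data[project][key]


store = ProjectStore()
store.add_member("risk", "alice")
store.add_member("marketing", "bob")
store.put("alice", "risk", "features.csv", b"id,score\n1,0.7\n")
try:
    store.get("bob", "risk", "features.csv")
except PermissionError as err:
    print("denied:", err)
```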

Hybrid Deployment

Build, train, and deploy models either on premises, in the cloud, or in a hybrid model

HPE Ezmeral ML Ops runs on-premises on any infrastructure, on multiple public clouds (Amazon Web Services, Google Cloud Platform, or Microsoft Azure), or in a hybrid model, providing effective utilization of resources and lower operating costs.

The Challenges to Operationalizing ML Models

Much like pre-DevOps software development, most data science organizations today lack streamlined processes for their ML workflows. Applying DevOps tools and practices to the ML lifecycle may seem like a straightforward solution. However, ML workflows are highly iterative, and off-the-shelf software development tools and methodologies will not work for them as-is. HPE Ezmeral ML Ops is one of the few solutions that address the challenges of operationalizing ML models. Public cloud service providers offer disjointed services, leaving users to cobble together an end-to-end ML workflow themselves. In addition, the public cloud may not be an option for the many organizations whose workloads must run on-premises because of vendor lock-in, security, performance, or data gravity concerns.

Complete ML Lifecycle Coverage

The HPE Ezmeral ML Ops solution supports every stage of the ML lifecycle: data preparation, model building, model training, model deployment, collaboration, and monitoring. HPE Ezmeral ML Ops is an end-to-end data science solution. It has the flexibility to run on-premises, in multiple public clouds, or in a hybrid model, and to respond to dynamic business requirements across a variety of use cases.

HPE Ezmeral ML Ops platform architecture

Key Benefits

Faster time-to-value: You can provision and manage development, test, or production environments in minutes rather than days. You can also instantly onboard new data scientists with their preferred tools and languages without creating siloed development environments.

Improved productivity: Data scientists spend their time building models and analyzing results rather than waiting for training jobs to complete. HPE Ezmeral ML Ops helps ensure no loss of accuracy or performance degradation in multitenant environments. It increases collaboration and reproducibility with shared code, project, and model repositories.

Reduced risk: It provides enterprise-grade security and access controls on compute servers and data. Lineage tracking provides model governance and auditability for regulatory compliance. Integrations with third-party software provide model interpretability. High-availability deployments help ensure critical applications do not fail.

Flexibility and elasticity: You can deploy on-premises, in the cloud, or in a hybrid model to suit your business requirements. HPE Ezmeral ML Ops autoscales clusters to meet the requirements of dynamic workloads.

Faster Time to Value for AI / ML

HPE provides data science teams with one-click deployment for distributed AI / ML environments and secure access to the data they need.

ML Solution

HPE Ezmeral ML Ops

A software solution that extends the capabilities of the HPE Ezmeral Container Platform to support the entire machine learning lifecycle and implement DevOps-like processes to standardize machine learning workflows.

Containers

HPE Ezmeral Container Software Platform

Software platform designed to run both cloud-native and non-cloud-native applications in containers.

Benefits

Transform non-cloud native apps without re-architecting.

Build an application once and deploy anywhere.
